The risks of AI in finance have become one of the hottest debates in 2026 as banks, fintech platforms & asset managers rapidly scale up machine learning across their core operations. AI is efficient in areas such as credit scoring and fraud detection, but it also introduces systemic weaknesses.

- The Shift 2026: Why Conventional Risk Frameworks are Crashing.

- Core Risk 1: AI Security Risk in Finance.

- Core Risk 2: AI in Finance Bias and the Inclusion Gap.

- Core Risk 3: AI Finance and Regulation Ethical Problems.

- Critical AI in Finance Risks and Effects.

- Intelligible AI Governance Strategies.

- Human-in-the-Loop Systems

- Transparent AI Systems

- Continuous Monitoring

- Cultured AI Governance Applications.

- AI Explainability Tools

- Fraud Detection Systems

- Bias Monitoring Solutions

- Conclusion: Performance-based versus Human Accountability.

- Frequently Asked Questions

Today's financial institutions operate in an environment where automation meets uncertainty. Although AI improves speed and decision-making, it raises new security, fairness, and accountability issues. Financial AI risks are no longer theoretical; they play out in real financial systems that affect millions of people every day.

This article examines how these risks are changing, where existing safeguards fall short, and what institutions should do to remain resilient in an AI-driven future.


The Shift 2026: Why Conventional Risk Frameworks are Crashing.

The single biggest driver behind the growing risks of AI in finance is the move from small pilot projects to full-scale AI deployment across entire financial systems. Today's financial systems depend on continuous-learning models that keep changing after deployment.

Key Structural Changes

- AI systems update continuously instead of relying on static rule-based checks.

- Decisions are made within milliseconds, often without human review.

- Big data causes data sprawl and data control gaps.

Traditional frameworks were designed for predictable systems. Modern AI, however, introduces non-linear risks, so traditional compliance models are no longer enough.

Why This Matters

The risks of AI in finance increase when:

- Legacy infrastructure cannot support the complexity of AI.

- Model behavior changes faster than monitoring systems can track.

- Institutions lack real-time auditing capabilities.

This is a fundamental change: risk is no longer periodic but continuous and evolving.

Core Risk 1: AI Security Risk in Finance.

Security sits at the very top of the list when it comes to the risks of AI in finance & the threat is growing on both sides of the equation. AI has improved fraud detection, but fraudsters now have access to equally advanced tools. The full scale of this challenge is covered in detail in how AI detects fraud in banking, where the arms race between AI defenders & AI attackers is explained clearly.

Synthetic Identity Fraud

Criminal networks now use AI to create:

- Hyper-realistic synthetic identities.

- Deepfake videos that pass KYC verification.

- AI-generated financial histories.

Such attacks bypass conventional verification systems by building what experts call a “perfect fraud profile.”

Adversarial Attacks on Financial Systems

Even the AI models themselves are vulnerable.

Attackers can:

- Poison training data to skew results.

- Exploit weaknesses in credit scoring systems.

- Feed false signals to trading algorithms.

The risks of AI in finance are further compounded when models are deployed autonomously and their outputs are not validated by humans.

Outlook: Industrialized Fraud

AI is facilitating fraud at scale & thousands of attacks can now be launched simultaneously in a way that was simply not possible before. This industrialization of deception could redefine cybersecurity in finance over the coming decade.

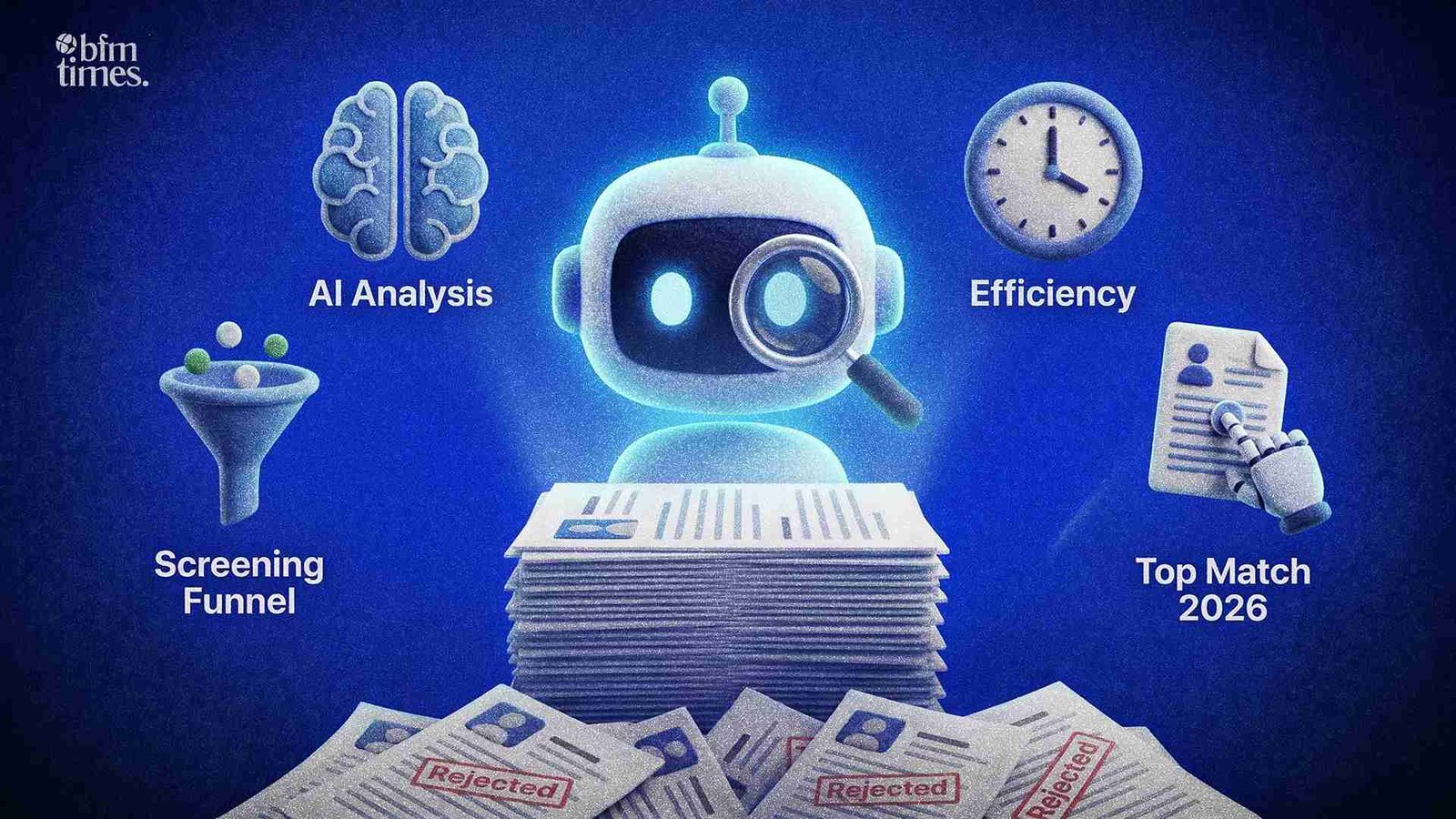

Core Risk 2: AI in Finance Bias and the Inclusion Gap.

Algorithmic bias is emerging as one of the most damaging & least visible risks of AI in finance & it strikes hardest at those who are already underserved. AI systems are only as fair as the data they are trained on. This is directly connected to how AI evaluates borrowers in loan approval, where biased training data can quietly block deserving applicants from getting credit.

Historical Bias Amplification.

If historical financial data is biased, AI will:

- Copy discriminatory patterns.

- Reinforce discrimination in lending.

- Reduce access for underserved groups.

This creates a cycle of inequality.

Proxy Discrimination

Even without any explicit prejudice, AI can rely on indirect variables, including:

- Location

- Spending behavior

- Device usage

These proxies can produce discriminatory effects unintentionally, which adds to the risks of AI in finance, particularly in credit decisions.
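One simple way to screen for proxies of this kind is to measure how strongly each candidate feature tracks a protected attribute. The sketch below is a minimal illustration, not a complete fairness test: the feature names, the sample values, and the 0.5 correlation threshold are all assumptions made for the example, and a linear correlation will miss non-linear or combined proxies.

```python
import math

def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0  # a constant column carries no signal
    return cov / (spread_x * spread_y)

def flag_proxies(features, protected, threshold=0.5):
    """Return features whose correlation with the protected flag is strong.

    `threshold` is an illustrative cut-off, not a regulatory standard.
    """
    flagged = {}
    for name, values in features.items():
        r = pearson(values, protected)
        if abs(r) >= threshold:
            flagged[name] = round(r, 3)
    return flagged

# Hypothetical data: 1 = applicant belongs to the protected group.
protected = [1, 1, 1, 1, 0, 0, 0, 0]
features = {
    "postcode_risk": [0.9, 0.8, 0.85, 0.95, 0.2, 0.1, 0.15, 0.25],
    "tenure_years": [3, 7, 2, 8, 3, 7, 2, 8],
}
flagged = flag_proxies(features, protected)
```

In this made-up sample, the location-based feature tracks group membership closely and is flagged, while tenure does not. A flagged feature is a prompt for human review, not proof of discrimination.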

Closing the Inclusion Gap

To reduce bias, institutions are implementing:

- Synthetic and balanced datasets.

- Fairness-aware algorithms

- Independent bias audits

Controlling bias is not only an ethical obligation; it is also key to financial inclusion and to limiting regulatory risk over the long term.
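A bias audit often starts with something as basic as comparing approval rates between groups. The following sketch computes a disparate impact ratio (each group's approval rate divided by a reference group's rate). The group labels and counts are invented for illustration, and the 0.8 cut-off is the widely quoted "four-fifths" rule of thumb, used here as an assumption rather than as a legal test for lending.

```python
from collections import defaultdict

def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact(decisions, reference_group):
    """Approval rate of each group relative to the reference group."""
    rates = approval_rates(decisions)
    ref_rate = rates[reference_group]
    if ref_rate == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return {g: r / ref_rate for g, r in rates.items() if g != reference_group}

# Hypothetical audit sample: group A approved 80 of 100, group B 50 of 100.
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 50 + [("B", False)] * 50
)
ratios = disparate_impact(sample, reference_group="A")
needs_review = [g for g, ratio in ratios.items() if ratio < 0.8]
```

Here group B's ratio is 0.625, which falls below the assumed 0.8 threshold and would be passed to an auditor for a closer look.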

Core Risk 3: AI Finance and Regulation Ethical Problems.

The ethical dimension is the third pillar of the risks of AI in finance, and it is growing more urgent as increasingly autonomous AI systems take on decisions that directly affect people’s financial lives with little human oversight.

The Black Box Problem

Many AI models are opaque, which makes it hard to explain:

- Why a loan was rejected.

- How a risk score was computed.

- Which factors affected a decision.

This lack of explainability increases regulatory and legal risk.
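For contrast, it helps to see what an explainable decision looks like. With a simple linear scorecard, each factor's contribution can be read off directly and turned into "reason codes" for a rejection. The sketch below assumes such a linear model; the weights, baseline values, and feature names are hypothetical, and the approach does not carry over to opaque models, which need dedicated attribution techniques.

```python
def reason_codes(weights, applicant, baseline, top_n=2):
    """Rank the factors that pulled a linear score down the most.

    Contribution of a feature = weight * (applicant value - baseline value),
    where the baseline stands for a typical applicant.
    """
    contributions = {
        name: weight * (applicant[name] - baseline[name])
        for name, weight in weights.items()
    }
    # Keep only factors that lowered the score, most harmful first.
    negatives = sorted(
        (value, name) for name, value in contributions.items() if value < 0
    )
    return [name for _, name in negatives[:top_n]]

# Hypothetical scorecard and applicant.
weights = {"income_k": 0.02, "utilization": -1.5, "late_payments": -0.4}
baseline = {"income_k": 60, "utilization": 0.3, "late_payments": 0.5}
applicant = {"income_k": 55, "utilization": 0.9, "late_payments": 3}

reasons = reason_codes(weights, applicant, baseline)
```

For this applicant the two main reasons are the number of late payments and high credit utilization, which is the kind of answer a customer or regulator can act on.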

Market Stability Risks

AI-based trading systems introduce the following risks:

- Flash crashes triggered by algorithmic reactions.

- Herd behavior across multiple AI systems.

- Rapid liquidity shifts

These systemic risks show that the risks of AI in finance affect not just individual institutions but entire markets.

Regulatory Evolution

Regulators around the world are responding with:

- AI risk management frameworks.

- Mandatory explainability requirements.

- Limits on automated decision-making.

How the risks of AI in the financial sector develop will depend in part on how quickly firms comply with these rules.

Critical AI in Finance Risks and Effects.

| Risk Category | Description | Impact Level | Future Risk Trend |
| --- | --- | --- | --- |
| Security Threats | Deepfakes, synthetic identities, AI fraud | High | Increasing |
| Model Manipulation | Adversarial attacks on AI systems | High | Increasing |
| Algorithmic Bias | Discriminatory lending and scoring outcomes | High | Stable/High |
| Lack of Explainability | Black-box decisions without transparency | Medium | Increasing |
| Market Volatility | AI-driven flash crashes and trading risks | High | Increasing |
| Regulatory Non-Compliance | Failure to meet evolving AI laws | Medium | Increasing |

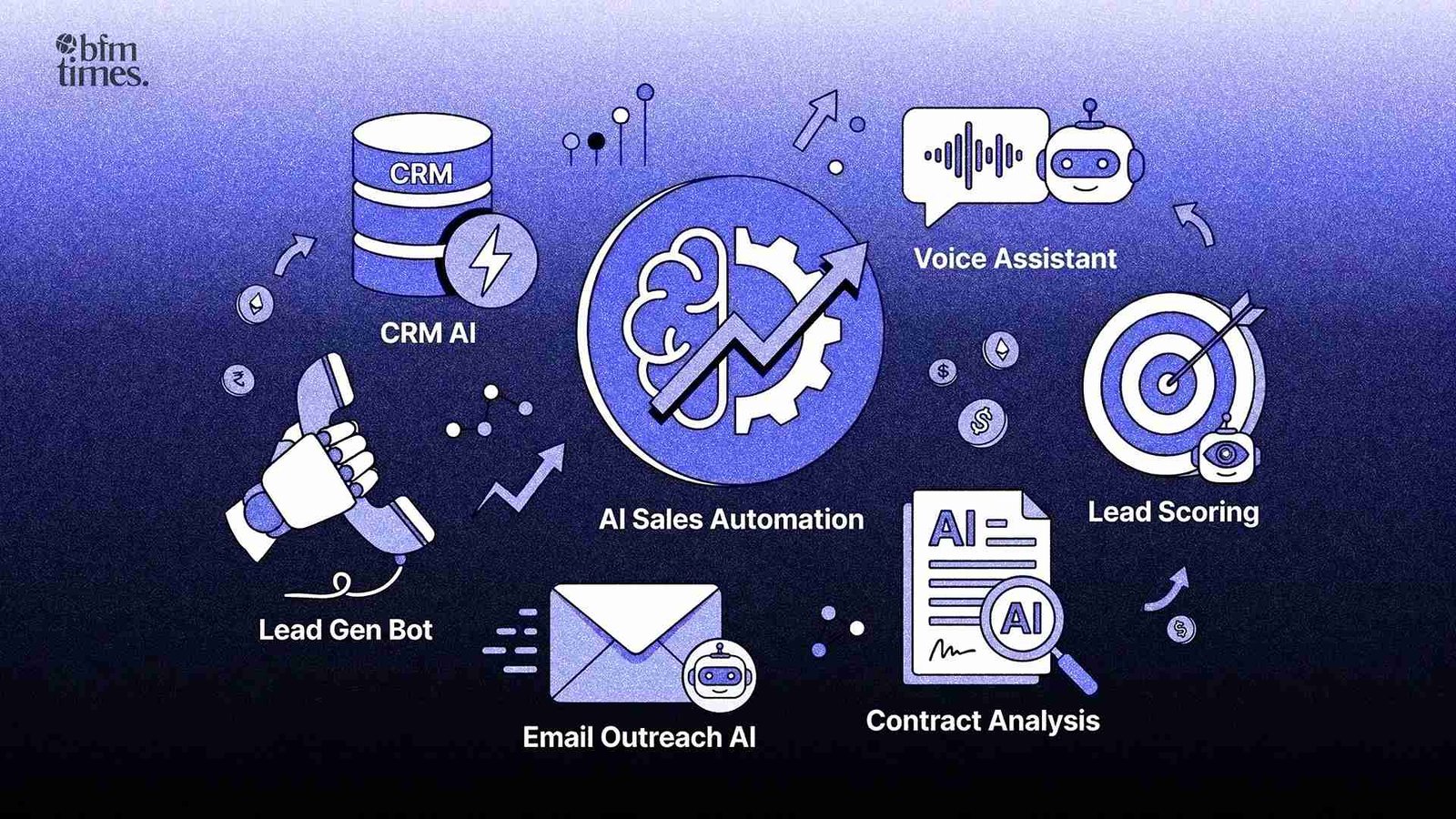

Intelligible AI Governance Strategies.

Managing the risks of AI in finance requires a structured & proactive governance approach that goes well beyond basic compliance checklists. The predictive analytics behind ML models shows exactly why continuous monitoring & human oversight are essential to keeping these systems fair & accountable.

Human-in-the-Loop Systems

The most important decisions should involve:

- Human oversight

- Approval checkpoints

- Override mechanisms
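These three elements can be pictured as a small routing rule. The sketch below is one possible arrangement, not a reference design: the `CreditDecision` type, the 0.85 approval score, and the 50,000 amount limit are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class CreditDecision:
    applicant_id: str
    model_score: float  # model's estimated probability of approval, 0..1
    amount: float       # requested loan amount

def route(decision, approve_at=0.85, amount_limit=50_000):
    """Approval checkpoint: only confident, low-value cases skip human review."""
    if decision.amount > amount_limit:
        return "human_review"
    if decision.model_score >= approve_at:
        return "auto_approve"
    # Uncertain or adverse outcomes always go to a person.
    return "human_review"

def finalize(decision, reviewer_decision: Optional[str] = None):
    """Override mechanism: a reviewer's decision always wins over the model."""
    if reviewer_decision is not None:
        return reviewer_decision
    if route(decision) == "auto_approve":
        return "approved"
    return "pending_review"

small_loan = CreditDecision("app-001", model_score=0.95, amount=10_000)
large_loan = CreditDecision("app-002", model_score=0.95, amount=80_000)
```

With these assumed thresholds, `small_loan` is approved automatically, `large_loan` waits for a reviewer despite its high score, and any reviewer decision passed to `finalize` replaces the model's outcome.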

Transparent AI Systems

Institutions should clearly document:

- How decisions are made

- What data is used

- Why outcomes occur

Continuous Monitoring

Unlike conventional systems, AI requires:

- Real-time tracking

- Model performance audits

- Bias and security testing
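One common building block for this kind of monitoring is a drift metric that compares recent model scores with a baseline sample. The sketch below computes the Population Stability Index (PSI) using equal-width buckets. The bucket count and the sample data are assumptions for illustration, and the 0.25 alert level used in the usage note is a frequently quoted rule of thumb rather than a fixed standard.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample of model scores."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp so out-of-range recent scores land in the edge buckets.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base, recent = bucket_shares(expected), bucket_shares(actual)
    return sum((r - b) * math.log(r / b) for b, r in zip(base, recent))

# Hypothetical scores: a uniform baseline and a sample shifted upward.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [min(s + 0.3, 0.99) for s in baseline_scores]

psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)
```

Comparing the baseline with itself gives a PSI of zero, while the shifted sample lands well above 0.25 and would trigger a model review under that assumed alert level.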

Cultured AI Governance Applications.

Financial institutions are adopting dedicated platforms to deal with the risks of AI in finance, and these tools are becoming essential parts of responsible AI deployment.

Model Risk Management Platforms

- Monitor AI model performance, detect drift, and maintain compliance.

AI Explainability Tools

- Give regulators and customers insight into how decisions are made.

Fraud Detection Systems

- Apply behavioral analytics and anomaly detection.
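As a rough illustration of anomaly detection, the sketch below flags transactions whose amount is far from a customer's typical spending, using a robust z-score based on the median and the median absolute deviation (MAD). The sample amounts are invented, and the 3.5 threshold is a commonly used default taken here as an assumption. Real fraud systems combine many more behavioral signals than a single amount.

```python
import statistics

def flag_anomalies(amounts, threshold=3.5):
    """Return (index, amount) pairs whose robust z-score exceeds the threshold."""
    median = statistics.median(amounts)
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        return []  # no spread in the data, so nothing can be scored
    flagged = []
    for index, amount in enumerate(amounts):
        # 0.6745 scales the MAD so the score is comparable to a standard z-score.
        score = 0.6745 * (amount - median) / mad
        if abs(score) > threshold:
            flagged.append((index, amount))
    return flagged

# Hypothetical card history: seven routine purchases and one outlier.
history = [42, 38, 51, 47, 40, 45, 39, 4800]
suspicious = flag_anomalies(history)
```

In this sample only the final transaction is flagged. The median-based score is used instead of a mean and standard deviation because a single large outlier would otherwise inflate the spread and hide itself.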

Bias Monitoring Solutions

These platforms are becoming important in preserving trust, compliance, and operational stability. The same accountability standards now apply to robo-advisors managing investments, where transparent AI governance is just as critical as it is in lending or fraud detection.

Conclusion: Performance-based versus Human Accountability.

The future of financial systems worldwide is being shaped by the risks of AI in finance. While AI may be more efficient than ever, it also creates more complex security, bias, and ethics problems.

Financial institutions should move from reactive compliance to proactive governance. The goal is not to halt innovation but to make it responsible and sustainable.

Final Insight:

The organizations that succeed in the AI-driven financial age will not be the ones that implement AI most quickly, but the ones that handle the risks of AI in finance with the most discipline, transparency, and accountability. As AI continues to reshape digital payments & every other corner of finance, getting governance right will define which institutions earn lasting trust.


Frequently Asked Questions

What are the main risks of AI in finance?

The key risks include security threats, algorithmic bias, and lack of transparency in decision-making.

How does AI create security risks in finance?

AI enables advanced fraud methods like deepfakes and synthetic identity attacks.

Why is AI bias a concern in financial systems?

AI can amplify historical data biases, leading to unfair lending and financial exclusion.

Disclaimer: BFM Times acts as a source of information for knowledge purposes and does not claim to be a financial advisor. Kindly consult your financial advisor before investing.